Artificial intelligence is not a tool of concealment; it is an instrument of revelation, and in that revelation lie both strategic clarity and existential discomfort. Systems once protected by opacity now confront a form of engineered transparency that rewards operational integrity while punishing structural fragility. Leaders who believed complexity insulated them now face the paradox of exposed resilience, where scale amplifies both strength and weakness in equal measure. The illumination is disciplined, yet the exposure it brings is destabilising. The central tension is unavoidable: organisations must protect competitive advantage while accepting that inefficiency will no longer remain hidden. The implication is immediate and non-negotiable: concealment strategies collapse under algorithmic scrutiny. A system designed to optimise cannot ignore contradiction, and contradiction now sits at the core of many institutions. The choice is stark: either redesign the system deliberately or watch it be diagnosed in real time.

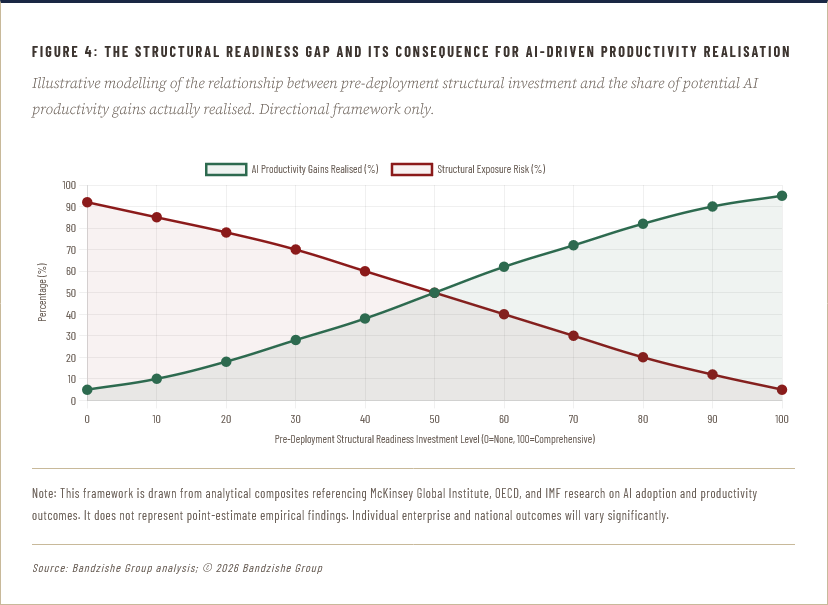

The economic dimension sharpens this reality: productivity gains derived from AI are contingent upon the removal of inefficiencies that institutions have historically tolerated. The contradiction is acute: leaders demand acceleration while preserving legacy constraints. The result is managed acceleration layered on inherited inertia. Evidence from global productivity analyses consistently indicates that technology adoption without organisational redesign produces marginal gains rather than systemic improvement. The lesson is unambiguous: optimisation without structural reform is cosmetic. For South African enterprises, this tension is intensified by legacy infrastructure constraints and uneven digital maturity, which convert technological adoption into a test of institutional coherence rather than mere capability. The question is unavoidable: can leadership tolerate the exposure required for genuine progress? The answer determines not technological success but institutional survival.

Any institution that deploys artificial intelligence without first engineering structural strength is not accelerating towards prosperity; it is accelerating towards a far more public and far more consequential collapse. The diagnostic is already running. The question is whether you will read its findings before the market does.

Approximately once a generation, a technology arrives that its proponents describe as a force of liberation, and its critics dismiss as a force of disruption; both are wrong, and both miss the point with remarkable consistency. Artificial intelligence is neither a saviour nor a destroyer; it is, at its core, an instrument of merciless clarity. It does not create institutional strength; it measures it. It does not generate strategic capability; it audits it. It does not produce governance excellence; it forensically examines the gap between governance as declared and governance as practised. What the world's most powerful institutions are discovering, often to their profound discomfort, is that deploying artificial intelligence within a structurally compromised enterprise does not accelerate that enterprise towards excellence; it accelerates its exposure. The speed of the machine amplifies whatever lies beneath, whether that is disciplined excellence or concealed dysfunction, and it does so with a precision that no public relations campaign, no quarterly report, and no board-level optimism can ultimately outrun. This is the confrontation that global leaders have been collectively unprepared to face, and the consequences of that unpreparedness are now compounding at a rate that makes delay not merely imprudent but genuinely indefensible.

The global context in which this reckoning is arriving could scarcely be more demanding. The World Economic Forum's Global Risks Report 2025 ranked misinformation and disinformation, a risk the report associates with the spread of AI-generated content, as the most severe global risk over its two-year horizon, a finding that carries a significance far beyond the obvious: it suggests that the primary near-term risk of artificial intelligence is not that it functions too slowly or too inaccurately, but that it functions with sufficient speed and sophistication to amplify whatever is dishonest, whatever is concealed, and whatever is structurally hollow. Against that backdrop, the chief executives, finance ministers, and institutional leaders who are positioning AI as a tool of competitive concealment rather than structural improvement are not navigating prudently within a difficult environment; they are constructing the conditions of their own most spectacular failure.

The Productivity Reckoning: Efficiency Gains and Structural Drag

AI enhances efficiency, yet it simultaneously magnifies inefficiency, creating a paradox of accelerated stagnation where gains in isolated processes are neutralised by systemic bottlenecks. This is local optimisation under a global constraint. The evidence from multinational deployments reveals a consistent pattern: firms achieve measurable improvements in discrete functions while failing to translate those gains into enterprise-wide productivity uplift. The reason is structural, not technological. Processes remain fragmented, incentives remain misaligned, and decision rights remain unclear. AI does not correct these failures; it exposes them with precision.

Consider what is actually happening at the frontier of enterprise AI adoption. Organisations that have invested substantially in large language models, predictive analytics, and autonomous decision-making systems are not uniformly reporting gains in competitive advantage; many are reporting a deepening awareness of operational faults that were previously obscured by the tolerant imprecision of human systems. Processes that appeared functional under manual oversight are revealed as inefficient when subjected to algorithmic analysis. Supply chains that appeared resilient prove fragile when exposed to end-to-end visibility. Customer data strategies that appeared coherent dissolve under the scrutiny of machine pattern recognition. This is not a failure of artificial intelligence; this is its success. The diagnostic is working exactly as intended. What is failing is the institutional assumption, held with inexplicable confidence by senior leaders across industries and across continents, that the deployment of intelligence technology is a substitute for the prior work of structural reform, rather than an accelerant of its absence. That assumption is not merely strategically naïve; it is fiscally dangerous, reputationally catastrophic, and, in several emerging-market contexts, economically destabilising.

South African firms face an intensified version of this challenge: operational constraints such as energy instability, logistics inefficiencies, and regulatory complexity create an environment where technological enhancement collides with systemic limitation. This is digital advancement built on infrastructural fragility. Consider a financial services firm deploying AI-driven risk modelling: the model may achieve superior predictive accuracy, yet its effectiveness is constrained by data fragmentation and governance inconsistencies. The outcome is partial improvement and systemic underperformance. The strategic conclusion is unavoidable: productivity gains require structural coherence, not technological enthusiasm. Leaders must therefore redesign operating models in parallel with technological adoption. Anything less is performance theatre.

The Forensic Instrument: Why AI Diagnoses Before It Delivers

The fundamental misapprehension that has shaped boardroom AI discourse for the past several years is the conflation of artificial intelligence with organisational intelligence. When executives speak of deploying artificial intelligence, they routinely mean the deployment of a system that will make their organisation smarter, faster, and more capable than it currently is. That ambition is not wrong; it is merely sequentially incorrect. Before any system can make an organisation more capable, it must first understand precisely how capable the organisation currently is, and the process of that understanding is, to borrow the language of medical diagnosis, frequently uncomfortable, occasionally alarming, and almost never flattering. The machine does not share the human capacity for motivated reasoning, the tendency to interpret ambiguous data in the direction of preferred conclusions; it processes what exists rather than what was declared to exist, and the difference between those two realities is, in most organisations, larger than any senior leadership team has been willing to formally acknowledge. This gap between declared capability and actual capability is precisely where structural weakness resides, and it is precisely where artificial intelligence first makes its presence felt.

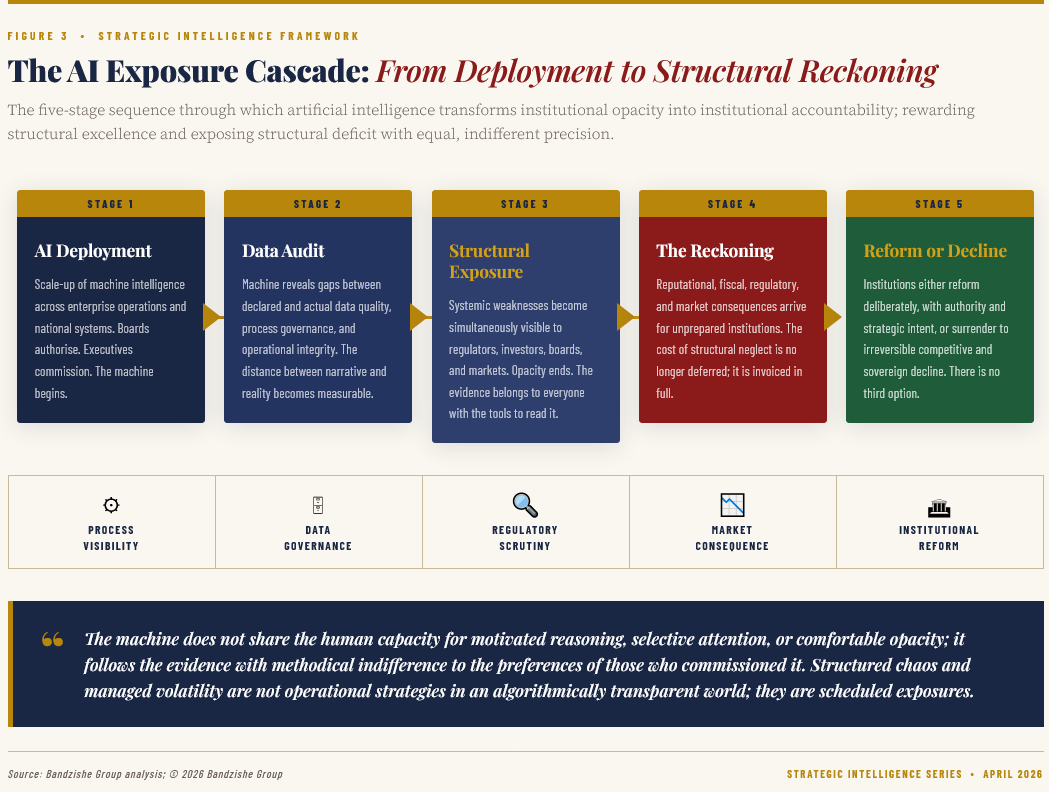

Artificial intelligence, deployed at scale within an enterprise, performs four diagnostic functions simultaneously, each of which exposes a different class of structural inadequacy.

First, it performs a process audit: it identifies, with granular specificity, where operational workflows are redundant, where decision latency is commercially destructive, and where manual interventions are masking systemic design failures.

Second, it performs a data audit: it reveals the quality, consistency, and governance of the information upon which the organisation has been making strategic decisions, and in most cases, that revelation is unflattering in the extreme.

Third, it performs a talent audit: by automating tasks that humans were performing at variable quality and speed, it makes immediately visible the question of what, precisely, the human workforce is capable of that the machine is not, a question to which many organisations have no prepared answer.

Fourth, and most consequentially for the purposes of this analysis, it performs a governance audit: it exposes the distance between the policies an institution claims to enforce and the behaviours it actually tolerates, a distance that is, in weak institutional environments, a chasm rather than a gap. When all four diagnostics arrive simultaneously, as they do when AI is deployed at enterprise scale, the result is not a moment of triumphant technological progress; it is a moment of structural reckoning, and institutions unprepared for that reckoning face consequences that are both operational and reputational.

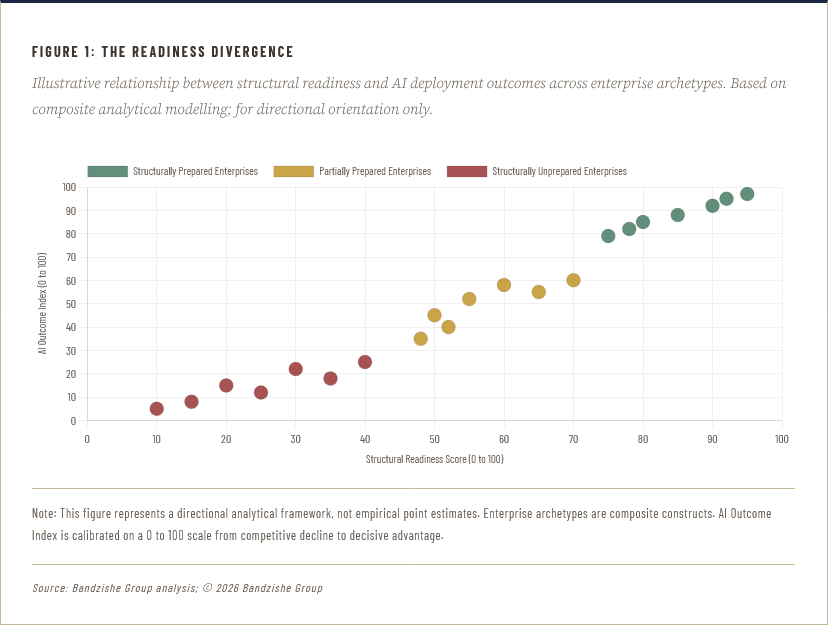

The antithesis at the heart of this diagnostic moment is stark, and must be confronted without equivocation: institutions that have invested in structural excellence before deploying artificial intelligence will find the machine a powerful amplifier of their strength, whilst institutions that have substituted technological ambition for the harder work of institutional reform will find the machine a relentless amplifier of their weakness. Both outcomes are the result of the same deployment; the divergence lies entirely in what was built before the machine arrived. This is the strategic truth that the consulting literature has been reluctant to state with sufficient clarity, perhaps because its implications are commercially inconvenient for firms whose revenues depend upon the sale of AI implementation services irrespective of the structural readiness of the client. The world's most sophisticated chief executives must now accept that the sequence matters enormously: structural reform precedes technological deployment, and not the reverse. To invert that sequence is not bold innovation; it is structured chaos of the least productive variety, a self-inflicted visibility crisis with no algorithmic remedy.

The Structural Deficit Laid Bare: What Enterprise Intelligence Actually Reveals

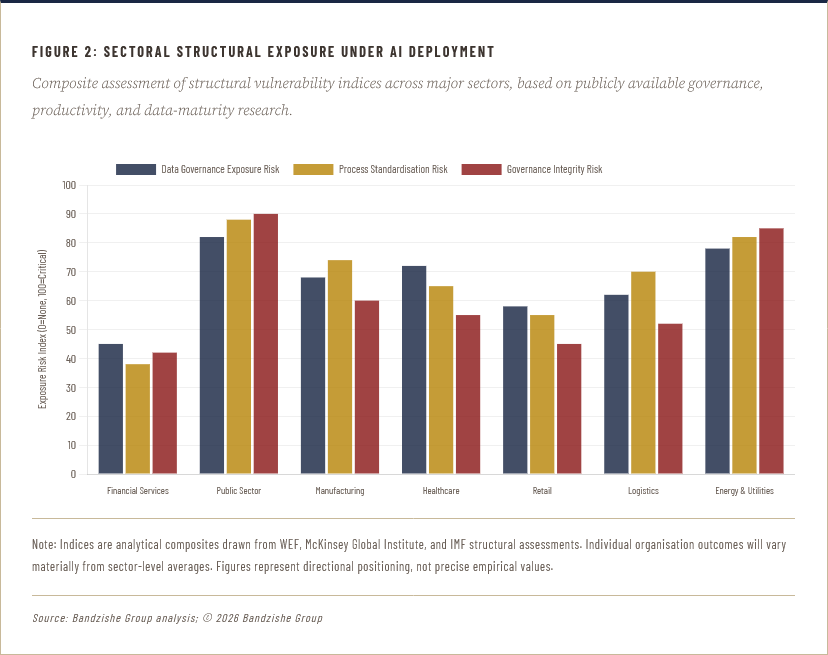

Across every sector in which artificial intelligence has achieved meaningful deployment depth, from financial services and healthcare to logistics, retail, and manufacturing, a pattern has emerged with sufficient consistency to be described as structural rather than coincidental. Organisations with legacy data governance practices, siloed operational divisions, inconsistent performance measurement frameworks, and cultures that reward the appearance of productivity over its substance, find that artificial intelligence does not improve these conditions; it quantifies them, makes them visible to internal and external stakeholders simultaneously, and removes the ambiguity that previously allowed senior leadership to maintain plausible deniability about the organisation's actual state. The machine, by its nature, does not accept narrative as evidence. It accepts data. And when the data contradicts the narrative, as it so frequently does, the resulting exposure can be swifter and more severe than any single operational failure, because it is systemic rather than incidental, and because it arrives accompanied by the authority of algorithmic certainty rather than the contestability of human judgement.

In the financial services sector, several of the world's largest institutions deployed AI-driven risk management and compliance monitoring systems in the years between 2019 and 2023, with the stated intention of improving regulatory performance and reducing operational risk. What those deployments consistently produced, before the competitive advantages arrived, was a visibility crisis: the systems identified, with brutal efficiency, the gap between documented compliance procedures and actual employee behaviour, between stated risk appetite and actual portfolio construction, and between declared customer outcomes and the reality of product mis-selling at scale. These institutions did not become less compliant after deploying AI; they became less able to conceal their existing non-compliance from regulators who now had access to the same analytical tools. The Financial Conduct Authority in the United Kingdom, for instance, has progressively expanded its use of machine-learning tools for market surveillance, a development that has dramatically increased the detection of conduct violations that previously escaped notice, not because they were rare but because earlier surveillance tools lacked the capacity to find them. This is the regulatory dimension of the AI exposure thesis: the same technology that enterprises are deploying internally is being deployed externally against them, and the structural weaknesses it finds do not remain proprietary intelligence for long.

The manufacturing sector tells an equally instructive story. When global automotive manufacturers began deploying AI-driven quality control and predictive maintenance systems across their production facilities, the initial expectation was a rapid reduction in defect rates and downtime. In the most structurally capable facilities, that expectation was met. In facilities where decades of under-investment in process standardisation, supplier qualification rigour, and engineering talent development had created conditions of managed volatility, the AI systems produced a different outcome: they identified the full extent of the variability that human operators had been compensating for through informal workarounds, tacit knowledge, and situational improvisation. The workarounds themselves, which had been invisible in operational reporting, became visible in AI-generated process maps, and the accumulated institutional dependency on improvisation rather than engineering discipline was exposed as a systemic rather than an incidental risk. This is managed volatility in its most revealing form: the discovery that what appeared to be operational stability was, in reality, organised improvisation at scale, a form of structured chaos that the machine refuses to accept as a permanent operating model.

The Governance Imperative: Weak Institutions Dissolve Under Algorithmic Scrutiny

The intersection of artificial intelligence and governance is where the structural exposure thesis acquires its most consequential dimension, not merely for the enterprise, but for the nation-state. Governance, in its most essential form, is the system through which a political or institutional entity converts its stated values and its formal mandates into actual outcomes in the world; the quality of that conversion determines whether the entity performs its function with integrity or sustains itself through the accumulated momentum of institutional inertia and deliberate opacity. For decades, weak governance has been partially protected by the sheer complexity of the systems it presides over; the difficulty of monitoring, cross-referencing, and analysing the enormous volume of decisions, expenditures, and outcomes that constitute government function has provided a structural shelter within which mediocrity, corruption, and systemic failure have persisted with minimal accountability. Artificial intelligence, deployed in the service of governance monitoring, removes that shelter with a completeness that should alarm every institution whose operational legitimacy depends upon opacity rather than performance.

The World Bank's 2025 Worldwide Governance Indicators report (WGI 2025 Revision), which rigorously assesses control of corruption, rule of law, government effectiveness, and regulatory quality across more than two hundred economies, reveals a striking and deeply uncomfortable global pattern. It is an organised instability wherein the nations with the weakest governance scores are, disproportionately, the nations that are simultaneously experiencing the most acute fiscal pressures, the most severe infrastructure deficits, and the most pronounced inequality of economic outcome. This is not coincidental; it is causal. Weak governance produces precisely the structural conditions (fiscal misallocation, regulatory capture, talent emigration, and investor uncertainty) that constrain economic performance, which in turn constrains the fiscal capacity to reform governance, producing a cycle of structured dysfunction that perpetuates itself across political cycles and across decades. The January 2026 Global Economic Prospects reinforces this diagnosis by demonstrating that the chasm between institutional quality and economic survival has reached a point of stable fragility. Whilst advanced economies project a facade of vulnerable strength, developing nations with low absolute governance scores remain trapped in a cycle of prosperous poverty. These states must navigate the calculated spontaneity of global capital markets whilst remaining tethered to outdated infrastructure.

The arrival of AI-enabled governance monitoring into this environment does not break the cycle automatically, but it does, for the first time in many contexts, make the cycle entirely visible to those who have the power, the means, and the motivation to interrupt it. International investors, multilateral institutions, and civil society organisations now have access to analytical tools that can identify governance failure at a granularity and a speed that previously required years of investigative effort. The accountability consequences of that shift are not theoretical; they are already reshaping sovereign credit assessments, foreign direct investment decisions, and development finance allocations in ways that structurally weak governments have been consistently underestimating.

The antithesis that defines this moment in global governance is this: AI does not discriminate between the governance it was deployed to improve and the governance it was deployed to protect from scrutiny; it examines both with the same methodical indifference to the preferences of those who commissioned it. A state that deploys AI in its public procurement systems to detect vendor fraud will simultaneously generate evidence of political interference in contract awards. A central bank that deploys machine learning to identify financial crimes will simultaneously generate evidence of regulatory forbearance extended to politically connected institutions. A regulatory body that deploys predictive analytics to identify market manipulation will simultaneously generate evidence of the inadequacy of its own historical enforcement. The machine does not share the human capacity for selective attention; it follows the evidence wherever it leads, and in weak institutional environments, it leads with remarkable consistency to the mechanisms of systemic self-perpetuation. This is not a theoretical risk; it is the predictable operational outcome of deploying a tool of radical transparency within a system that has been engineered, consciously or by accretion, to resist precisely that transparency.

The South African Reckoning: A Nation Confronting the Mirror of Its Own Making

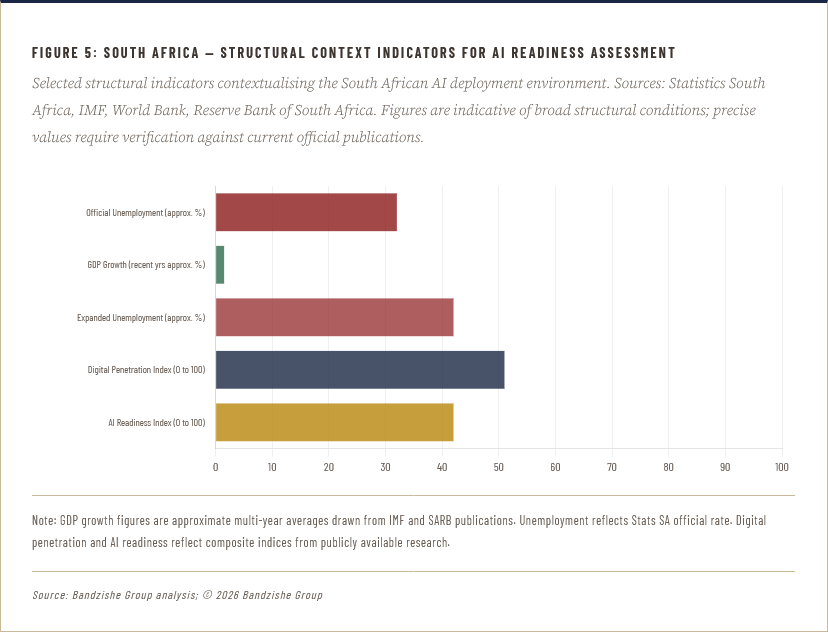

No economy on the African continent, and few in the emerging-market world, illustrates the structural exposure thesis with greater instructive clarity than South Africa. The country possesses, in unusual combination, several of the characteristics that make the arrival of AI-enabled scrutiny most consequential: a sophisticated formal financial sector operating alongside profound structural inequality; a public sector of considerable institutional capacity operating alongside chronic service-delivery failure; a population of exceptional educational and entrepreneurial talent operating alongside an official unemployment rate that Statistics South Africa has consistently measured above 32 per cent, with the expanded definition exceeding 42 per cent. These are not contradictions; they are the symptoms of a structural fracture that has persisted across administrations and across decades, and that the comfortable ambiguity of pre-digital governance has allowed to be managed narratively rather than addressed systemically. The digital scrutiny now available to markets, rating agencies, multilateral institutions, and domestic civil society removes that ambiguity with a finality that should concentrate every mind in government, in the boardroom, and in the institutional leadership of every major South African organisation.

The energy crisis that defined South Africa's economic trajectory through the early-to-mid 2020s was, in essence, a structural exposure event that preceded the widespread deployment of AI, but which demonstrated, with painful clarity, the mechanism that AI will replicate at scale and in real time. Eskom, the state-owned power utility, had for years presented operational narratives to government, to the public, and to financial markets that did not accurately reflect the extent of generation capacity deterioration, procurement irregularity, and maintenance deferred beyond any point of prudent engineering judgement. The eventual visibility of those realities, forced by the physical impossibility of continuing to conceal the gap between declared capacity and actual output, produced consequences that were neither contained nor merciful: sustained rolling power cuts that the Reserve Bank and independent economic analysts estimated significantly constrained South Africa's GDP growth across multiple consecutive years, accelerated capital outflows, downgraded sovereign credit assessments, and an investment climate characterised by the particular wariness that comes when investors conclude that the narrative and the reality of a major institution cannot be reconciled. This is precisely the exposure dynamic that AI-enabled monitoring will replicate across every structurally weak institution in the country, and it will replicate it faster, more comprehensively, and with a granularity of evidence that the physical deterioration of power stations could not match.

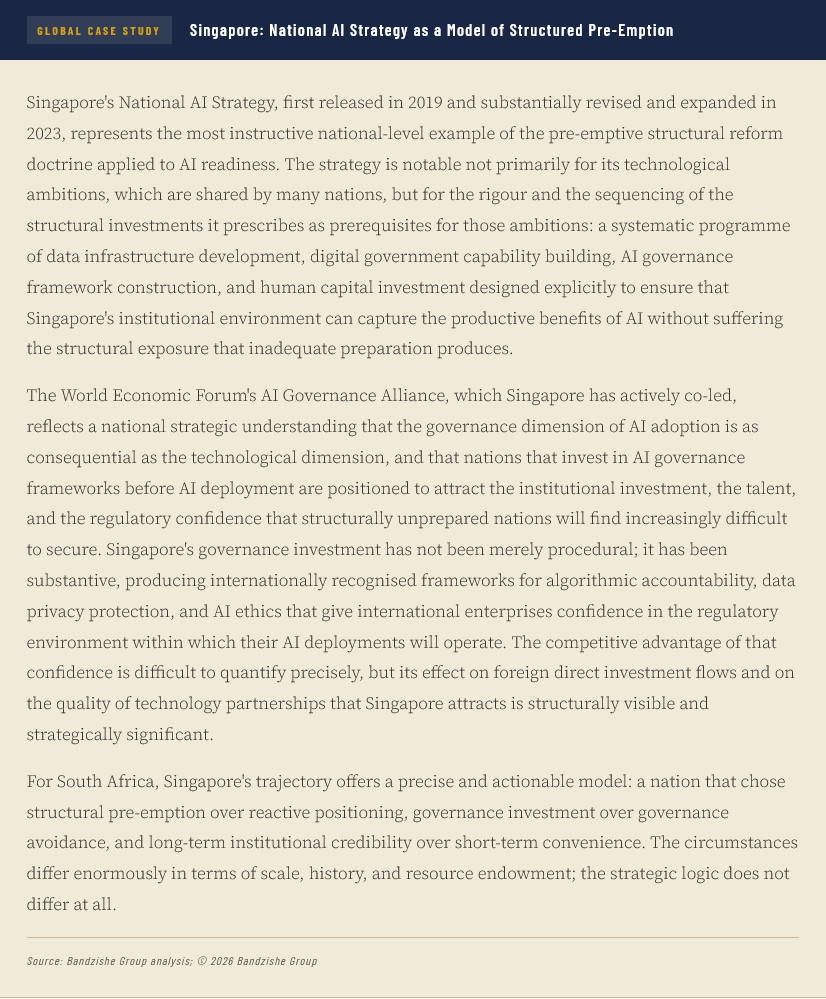

The opportunity for South Africa, however, is as significant as the risk, and it would be a counsel of despair rather than of strategic clarity to present the diagnostic without the remedy. The country's private sector, and specifically its financial services and telecommunications industries, has demonstrated that structural excellence and genuine AI readiness are achievable within the South African context. FirstRand Group, the JSE-listed financial services conglomerate, has invested systematically in data governance, talent development, and the organisational conditions of analytical maturity, positioning itself among the more structurally prepared financial institutions in the emerging-market universe for the transition to AI-augmented operations. Telecommunications operators have deployed machine-learning systems for network optimisation and fraud detection across multiple African jurisdictions, generating the operational experience and data infrastructure that create genuine competitive advantage when AI deployment scales. These are not isolated examples of corporate sophistication; they are proof points that the structural preparation AI requires is achievable locally, and that the obstacle to broader progress is not the absence of capacity but the absence of the institutional will to prioritise structural integrity over the more immediate rewards of managed opacity.

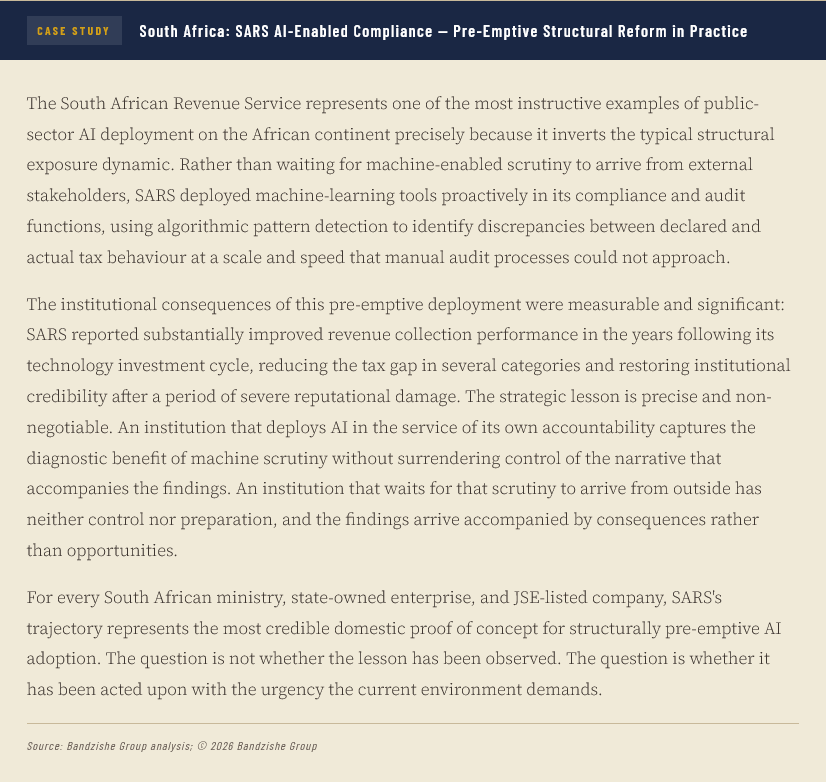

The National Development Plan, South Africa's long-term strategic framework, articulates ambitions that are structurally coherent and analytically defensible; the distance between those articulated ambitions and measurable national outcomes is itself a structural exposure waiting to be quantified by the analytical tools that multilateral institutions and international investors are deploying with increasing sophistication. The South African Revenue Service has, it should be noted, deployed AI-assisted compliance monitoring with measurable success in identifying tax evasion patterns at scale, a development that demonstrates both the institutional capacity for effective AI deployment within the public sector and the precision with which such deployment exposes the gap between declared fiscal compliance and actual behaviour. This is the productive version of the AI exposure thesis: a government that deploys AI in the service of its own accountability frameworks is one that is pre-empting the more damaging external exposure that follows from the alternative. The distinction between deploying AI as a tool of self-imposed discipline and waiting for AI to be deployed against you as a tool of external accountability is the strategic choice that every South African public and private institution now faces, and it is a choice with consequences that cannot be deferred much longer.

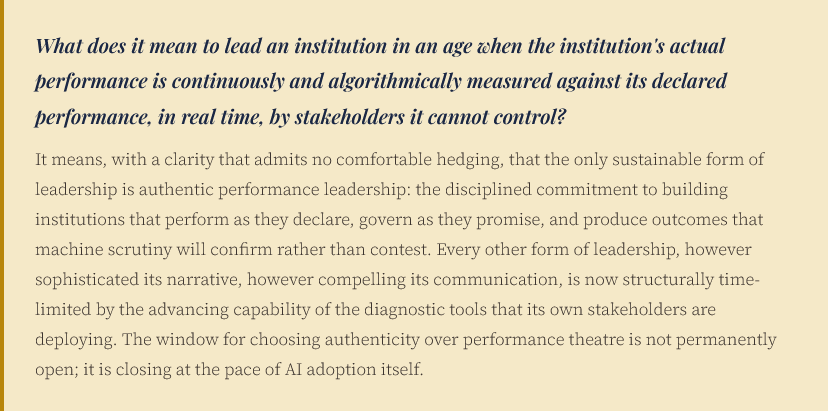

The Leadership Paradox: Managed Volatility in an Age of Machine Transparency

The leaders who will navigate the AI exposure era with distinction are precisely those who resist the comfortable assumption that technological deployment is a substitute for the harder, slower, less glamorous work of institutional design. This is the leadership paradox of the current moment, and it is a paradox that deserves to be stated without diplomatic softening: the executives most confident in their AI adoption strategies are, in several cases, the executives most exposed to AI's diagnostic consequences, because confidence in technological deployment without prior investment in structural preparation is not strategic sophistication; it is strategic theatre, performed for boards and shareholders and analysts with tools that will, in due course, review their own performance with the same precision they were purchased to apply to everything else. The chief executives and board members who emerge with institutional credibility intact from this period will be those who confronted the diagnostic evidence early, communicated it honestly to their stakeholders, and invested in the structural reforms that the evidence demanded, rather than those who deployed the technology with the greatest speed and the least accompanying self-examination.

The concept of managed volatility, which has currency in risk management discourse as a description of environments in which uncertainty is contained within defined bounds through deliberate design, acquires a new and uncomfortable meaning in the AI transparency context. In the pre-AI governance environment, managed volatility described what structurally weak institutions actually practised: not the absence of dysfunction, but its containment within the bounds of narrative control. The volatility was real; the management was the management of its visibility rather than its substance. AI-enabled transparency terminates that form of management with a completeness that has no historical precedent, because it operates continuously, at machine speed, across the entirety of an organisation's or nation's data environment, without the capacity for selective attention that human oversight systems routinely apply. When the machine is running, the volatility cannot be managed through narrative; it can only be managed through the elimination of the structural conditions that produce it. This requires a fundamental recalibration of what leadership means: from the management of perception to the engineering of performance, from the orchestration of strategic narratives to the delivery of strategic outcomes, and from the tolerance of managed dysfunction to the intolerance of dysfunction in any form.

The global evidence for this recalibration is already visible in the differential performance of enterprises that invested in data governance, process standardisation, and organisational transparency as prerequisites for AI adoption, versus those that did not. JPMorgan Chase's AI investment, which the institution has publicly described as one of the most substantial in the global financial services sector, has been accompanied by equally substantial investment in the data engineering and governance infrastructure without which machine-learning systems cannot function with the accuracy that creates competitive advantage rather than reputational risk. Amazon's operational AI capabilities, which underpin its logistics and customer experience performance at a scale that no competitor has yet matched, rest upon process engineering discipline that preceded the AI deployment by years and, in many cases, by decades. These are not enterprises that deployed AI and discovered structural strength; they are enterprises that built structural strength and thereby earned the right to deploy AI productively. The sequence is not incidental; it is the entire strategic argument.

The Productivity Mirage: When Operational Efficiency Disguises Systemic Decay

One of the most seductive and most dangerous forms of institutional self-deception in the current environment is the conflation of operational efficiency with structural health. An organisation can be operationally efficient, in the sense of performing its existing activities with minimal waste, while simultaneously deteriorating structurally, in the sense of performing the wrong activities with increasing excellence. The classic illustration is the enterprise that optimises its legacy business model with machine precision whilst the market's requirements evolve beyond that model's relevance; the efficiency gains are real, the structural exposure is also real, and the AI systems that deliver the efficiency also, if deployed with appropriate analytical breadth, identify the structural obsolescence. McKinsey Global Institute's research on AI and productivity has consistently highlighted the gap between the productivity gains achievable through AI deployment and the productivity gains that organisations actually realise, and the primary explanation for that gap is not technological limitation but structural unreadiness: many organisations lack the data quality, the process standardisation, and the organisational alignment that AI requires in order to deliver its full productive potential.

The productivity mirage manifests with particular acuity in organisations where the performance management system has been designed, consciously or otherwise, to reward activity rather than outcome, effort rather than impact, and complexity rather than clarity. These organisations generate enormous volumes of structured busyness that, when subjected to AI-enabled outcome analysis, reveal a striking and uncomfortable truth: a substantial proportion of the activity that consumes organisational resources produces no measurable contribution to the outcomes the organisation exists to deliver. This is structured chaos in its most institutionally entrenched form: a system that has organised the appearance of productivity with such sophistication that it has become genuinely difficult, within the system itself, to distinguish productive from unproductive activity. The machine has no such difficulty. It follows the data from input to outcome and identifies, with a precision that no management review has previously achieved, exactly which activities produce which results, which processes consume resources without generating value, and which functions are, in the language of strategic management, theatre rather than substance. For every organisation that has built its internal culture around the performance of effort rather than the delivery of outcomes, the arrival of AI-enabled productivity analytics is not an opportunity; it is a reckoning, and it is a reckoning that will arrive whether or not the organisation's leadership has chosen to prepare for it.

The global context of this productivity crisis is well documented and deeply serious. The IMF and the OECD have both noted, in multiple publications across the past decade, that advanced-economy productivity growth has been structurally below its pre-2008 trend, a phenomenon that academic economists describe as the productivity paradox of the digital age: the simultaneous acceleration of technological capability and deceleration of measured economic productivity. The most persuasive explanation is not that technology is failing to deliver productive gains, but that the institutional conditions required to capture those gains, including structural reforms, governance improvements, and human capital investments, have not been put in place at the scale or the speed the opportunity demands. Artificial intelligence, deployed within this environment of structural underpreparedness, does not resolve the paradox; it sharpens it, measuring the gap between potential and realised productivity with greater precision and thereby making that gap more visible, and more consequential, to the stakeholders who hold the power to respond to it. The nations and the enterprises that close the gap before the machine makes it fully public will capture the productive upside of AI without the reputational and fiscal costs of structural exposure. Those that do not will face the diagnosis and its consequences at the same time.

The Strategic Doctrine: Pre-Emptive Reform as the Only Defensible Position

The strategic argument this analysis has constructed leads to a single and inescapable doctrinal conclusion: in an era of AI-enabled institutional transparency, the only defensible strategic position is pre-emptive structural reform, the deliberate, sequenced, and sustained programme of institutional improvement that precedes AI deployment rather than following it, and that treats the machine's future diagnostic findings as the design brief for the organisation's present reform agenda. This is not a counsel of perfection; no institution achieves structural excellence without iterative effort and sustained investment. It is a counsel of urgency and of sequence: begin the structural work now, before the machine's diagnostic amplifies the consequences of its absence, and design the reform programme explicitly around the dimensions of structural readiness that AI deployment will expose. Institutions that follow this sequence will find AI a powerful ally; those that invert it will find AI a relentless adversary, not because the machine is hostile but because the evidence it generates is accurate, and accuracy, in the presence of structural weakness, is the most consequential form of adversity.

The practical implications of this doctrine differ by context, and any analysis that prescribes identical remedies for a Fortune 500 technology company and a sub-Saharan African ministry of finance is an analysis that has sacrificed strategic utility for intellectual convenience. For global enterprises operating in advanced-economy markets with sophisticated regulatory environments, the pre-emptive reform agenda centres on data governance, talent strategy, and the cultural conditions that allow AI's diagnostic findings to be received as improvement intelligence rather than institutional indictments. For emerging-market enterprises, and particularly for South African organisations operating in the current environment, the agenda is more foundational: it includes the basic conditions of operational discipline, the elimination of systemic dysfunction that has been normalised through decades of under-accountability, and the investment in human capital that allows organisations to engage with AI as a tool of strategic development rather than an instrument of competitive exposure. Both agendas are demanding. Both are, in the current environment, non-negotiable.

For global consulting engagements, the pre-emptive reform doctrine translates into a precise and sequenced advisory framework that this analysis commends to every enterprise facing the AI deployment decision. The first priority is a structural readiness audit, an honest and forensic assessment of the organisation's data quality, governance integrity, process standardisation, and talent capability against the specific requirements of the AI deployment it is contemplating. The second priority is a gap-closure programme, a time-bounded initiative with measurable milestones that addresses the structural deficits identified in the audit before AI deployment begins at scale. The third priority is a parallel AI pilot programme, deployed in the areas of greatest structural readiness to generate early performance evidence, develop institutional AI literacy, and build the cultural confidence that successful structural-AI integration requires. The fourth priority is a continuous diagnostic loop, in which AI's ongoing analytical outputs are systematically reviewed by leadership not as performance scorecards but as structural improvement guides, treated with the same seriousness and the same institutional authority as a financial audit or a regulatory inspection. Enterprises that execute this sequence with discipline will find that AI's diagnostic function becomes, over time, not a source of institutional risk but its most powerful instrument of continuous improvement.

For South African enterprises specifically, several additional dimensions of strategic action are warranted by the country's particular structural context. The energy and infrastructure constraints that have characterised the South African business environment require that AI deployment strategies account explicitly for the operational variability those constraints introduce, ensuring that machine-learning models trained on South African operational data are not systematically distorted by infrastructure disruptions that will diminish in frequency as the structural energy reform programme advances. The talent constraints imposed by decades of educational inequality require that AI adoption strategies are accompanied by deliberate and sustained investment in digital skills at every level of the workforce, ensuring that the employment consequences of automation are managed with strategic intentionality rather than allowed to compound an unemployment crisis that the country can ill afford to deepen. And the governance constraints of the public sector require that private-sector AI adoption is accompanied by active engagement with the regulatory and policy environment, ensuring that the institutions responsible for the country's competitive positioning understand the structural implications of AI deployment with sufficient clarity to design intelligent and enabling policy responses.

The Implementable Imperative: Pathways That Compel Boards to Act Without Hesitation

Strategic analysis that does not translate into action is not strategic analysis; it is intellectual entertainment, and the world's most consequential problems are not solved by audiences who found the argument compelling but did nothing in response. This section, therefore, offers a set of implementable pathways that boards, chief executives, finance ministers, and institutional leaders can deploy immediately, not as aspirations for the medium term but as decisions for the current quarter. The urgency is not rhetorical; AI adoption is accelerating at a pace that renders the deferral of structural preparation an increasingly costly strategic error, and that cost compounds rather than growing linearly, because the diagnostic evidence accumulates, stakeholder awareness grows, and the window for pre-emptive action narrows with each passing cycle of deployment.

The first implementable pathway is the Structural Exposure Audit, a board-commissioned, independently conducted assessment of the organisation's structural readiness for AI deployment across the seven dimensions identified in the framework above, namely data governance, process standardisation, talent capability, governance integrity, strategic measurement, innovation culture, and regulatory literacy. This audit must be conducted with the same rigour and the same independence as a financial audit, must report directly to the board rather than through management, and must produce findings that are treated as material to the organisation's strategic risk assessment rather than as advisory recommendations. For South African organisations, this audit should additionally assess the specific structural dimensions of the country's operating environment, including energy resilience, currency volatility management, and skills pipeline adequacy, that will determine the extent to which AI deployment amplifies competitive advantage or operational exposure. The audit is the beginning, not the conclusion; its value lies entirely in the quality of the structural reform programme it informs.
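For boards that want to see how such an audit's findings might be recorded and prioritised, the following is a minimal Python sketch, not a prescribed method. It uses the seven dimensions named above; the 0-to-5 scoring scale, the threshold of 3, the `AuditFinding` and `readiness_gaps` names, and the example scores are all hypothetical assumptions introduced purely for illustration.

```python
from dataclasses import dataclass

# The seven dimensions named in the text.
DIMENSIONS = (
    "data governance",
    "process standardisation",
    "talent capability",
    "governance integrity",
    "strategic measurement",
    "innovation culture",
    "regulatory literacy",
)


@dataclass(frozen=True)
class AuditFinding:
    """One audit result (hypothetical structure)."""
    dimension: str
    score: int  # assumed scale: 0 (absent) to 5 (fully ready)


def readiness_gaps(scores: dict[str, int], threshold: int = 3) -> list[AuditFinding]:
    """Return dimensions scoring below the threshold, weakest first.

    The threshold is an illustrative assumption, not a recommended value.
    """
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        # An audit that skips a dimension is incomplete, so refuse to rank it.
        raise ValueError(f"unscored dimensions: {missing}")
    gaps = [AuditFinding(d, scores[d]) for d in DIMENSIONS if scores[d] < threshold]
    return sorted(gaps, key=lambda finding: finding.score)


# Illustrative scores only; they do not describe any real organisation.
example_scores = {
    "data governance": 2,
    "process standardisation": 4,
    "talent capability": 1,
    "governance integrity": 3,
    "strategic measurement": 2,
    "innovation culture": 4,
    "regulatory literacy": 5,
}

for finding in readiness_gaps(example_scores):
    print(f"{finding.dimension}: {finding.score}")
```

The ranked output is only a starting agenda for the reform programme; the judgement behind each score remains the work of the independent auditors.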

The second implementable pathway is the Governance Integrity Programme, a time-bounded initiative, no longer than eighteen months in its initial phase, that closes the single most consequential structural gap that AI deployment will expose: the distance between declared governance standards and actual governance practice. For enterprises, this means a systematic review of every policy, every procedure, and every performance management mechanism that governs behaviour within the organisation, followed by the disciplined closure of every gap between what the policy declares and what the data shows. For governments, it means the same rigour applied to public expenditure management, procurement practice, and public service performance accountability, with the explicit recognition that AI-enabled monitoring by multilateral institutions, rating agencies, and international investors will render these gaps visible regardless of whether the government chooses to close them proactively or not. The strategic choice between proactive closure and reactive exposure is, in the current environment, the most consequential governance decision that any institution's leadership will make.

The third implementable pathway is the Human Capital Renewal Programme, which addresses what is, in several respects, the most structurally urgent dimension of AI readiness and the one most consistently underestimated by leaders who focus on the technological rather than the human dimensions of the transition. The deployment of artificial intelligence at enterprise scale does not merely change the tasks that human workers perform; it changes the fundamental basis upon which human contribution creates value. In an AI-augmented environment, the human competitive advantage lies not in the performance of routine cognitive tasks, which machines execute with greater speed, consistency, and scale, but in the application of judgement, creativity, contextual wisdom, ethical reasoning, and relational intelligence to problems that machines can process but cannot resolve without human guidance and oversight. Building an enterprise workforce capable of operating at that level of human intelligence is not a training programme; it is a strategic investment in the conditions of sustained human relevance within an AI-enabled economy, and it requires the commitment of leadership attention, financial resource, and organisational time at a scale that most enterprises have not yet accepted as necessary.

The Civilisational Stakes: Economic Resilience as the Foundation of Legitimate Leadership

Economic growth, at its deepest level of strategic significance, is not a macroeconomic variable; it is a civilisational force that determines the social cohesion of nations, the geopolitical relevance of states, the technological sovereignty of industries, the fiscal durability of governments, and the legitimacy of the leaders who claim the mandate to govern. When artificial intelligence makes the structural weakness of an enterprise or a nation visible to the markets, the investors, and the citizens who had previously accepted the narrative of institutional competence, the consequence is not merely financial; it is political, social, and civilisational. A nation whose structural weaknesses are exposed by AI in the view of international capital markets does not merely lose investment; it loses the credibility that legitimate governance requires. A corporation whose structural dysfunctions are quantified by machine analytics in the view of institutional shareholders does not merely lose market capitalisation; it loses the stakeholder trust without which sustainable enterprise is ultimately impossible. The stakes of the AI exposure era are, therefore, not merely strategic; they are existential for institutions that have built their authority upon the concealment of structural weakness rather than the engineering of structural strength.

The nations and the enterprises that will emerge from the AI transition with their authority enhanced rather than diminished are those that embrace the diagnostic function of machine intelligence not as a threat to be managed but as an opportunity to be seized. They are the institutions that look into the mirror the machine holds up and respond not with denial but with the disciplined rigour of structural reform; the institutions whose leaders possess the intellectual courage to confront the distance between declared and actual performance, and the strategic clarity to close that distance before the market forces the confrontation. They are the institutions, in other words, that understand that the most intelligent systems do not think like humans; they help humans think better. And when the thinking is finally done with the ruthless precision that artificial intelligence enables, the institutions that have been thinking honestly, building authentically, and governing with integrity will find, in AI's diagnostic mirror, not the exposure of their weakness but the confirmation of their strength.

The antithesis that defines this moment is, in its historical scope, without precedent: artificial intelligence simultaneously represents the most powerful instrument of competitive advantage ever made available to human institutions and the most comprehensive mechanism of institutional accountability ever deployed against them. Every board that has tolerated structural mediocrity on the grounds that it was invisible to external scrutiny must now reckon with the fact that the invisibility is ending. Every government that has governed through the opacity of bureaucratic complexity must reckon with the fact that the opacity is dissolving. Every enterprise that has reported performance through the selective presentation of favourable metrics must reckon with the fact that the selectivity is no longer available. The machine does not accept opacity as a governance strategy; it accepts only data, and the data, when properly interrogated, tells the truth. The question, and it is the most consequential strategic question of the current era, is whether the world's institutions will choose to tell that truth themselves, proactively, with the authority of self-imposed accountability, or whether they will wait for the machine to tell it for them, with the authority of algorithmic certainty and the accompanying consequence of market-imposed correction.

Images by Bandile Ndzishe of Bandzishe Group

About Bandile Ndzishe

Bandile Ndzishe is the CEO, Founder, and Global Consulting CMO of Bandzishe Group, a premier global consulting firm distinguished for pioneering strategic marketing innovations and driving transformative market solutions worldwide. He holds three business administration degrees: an MBA, a Bachelor of Science in Business Administration, and an Associate of Science in Business Administration.

With over 30 years of hands-on expertise in marketing strategy, Bandile is recognised as a leading authority across the trifecta of Strategic Marketing, Daily Marketing Management, and Digital Marketing. He is also recognised as a prolific growth driver and a seasoned CMO-level marketer.

Bandile has earned a strong reputation for delivering strategic marketing and management services that guarantee measurable business results. His proven ability to drive growth and consistently achieve impactful outcomes has established him as a well-respected figure in the industry.

As an AI-empowered and AI-powered marketer, I bring two distinct strengths to the table: I am empowered by AI to achieve my marketing goals more effectively, and I leverage AI as a tool to enhance my marketing efforts and deliver the desired growth results. My professional focus resides at the nexus of artificial intelligence and strategic marketing, where I explore the profound and enduring synergy between algorithmic intelligence and market engagement.

Rather than pursuing ephemeral trends, I examine the fundamental tenets of cognitive augmentation within marketing paradigms. I analyse how AI's capacity for predictive analytics, bespoke personalisation, and autonomous optimisation precipitates a transformative evolution in consumer interaction and brand stewardship. By extension, I seek to comprehend the strategic applications of artificial intelligence in empowering human capability and fostering innovation for sustainable societal advancement.

In essence, I explore how AI augments human decision-making and strategic problem-solving in both marketing and other domains of life. This is not merely an interest in technological novelty, but a rigorous investigation into the strategic implications of AI's integration into the contemporary principles of marketing practice and its potential to reshape decision-making frameworks, rearchitect strategic problem-solving paradigms, enhance strategic foresight, and influence outcomes in diverse areas beyond the marketing sphere.